
64 Essential Testing Metrics for Measuring Quality Assurance Success. Software testing metrics are a way to measure and monitor your test activities. More importantly, they give insight into your team’s test progress, productivity, and the quality of the system under test, and they are important indicators of the efficiency and effectiveness of the testing process. This article shows, with examples, how to use test metrics and measurements in the software testing process: starting from your bug list, how to approach root cause analysis and defect resolution, and how to plan and implement a meaningful metrics practice so that measurement becomes a valued part of your project and test planning activities.

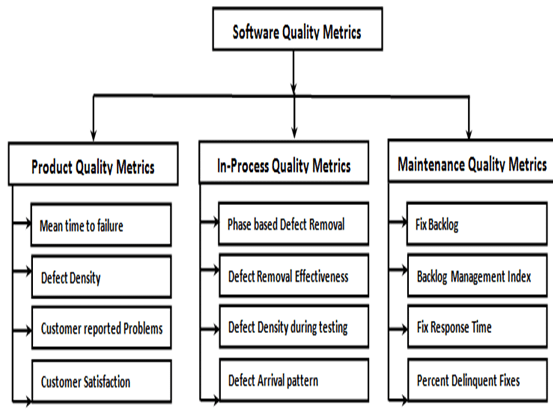

Software metrics can be classified into three categories: product metrics, process metrics, and project metrics.

Some metrics belong to multiple categories. For example, the in-process quality metrics of a project are both process metrics and project metrics.

Software quality metrics are a subset of software metrics that focus on the quality aspects of the product, process, and project. These are more closely associated with process and product metrics than with project metrics.

Software quality metrics can be further divided into three categories: product quality metrics, in-process quality metrics, and maintenance quality metrics.

Product Quality Metrics

These metrics include mean time to failure, defect density, customer problems, and customer satisfaction.

Mean Time to Failure

It is the time between failures. This metric is mostly used with safety-critical systems such as air traffic control systems, avionics, and weapons.

Defect Density

It measures defects relative to the software size, expressed in lines of code, function points, or a similar unit; that is, it measures code quality per unit of size. This metric is used in many commercial software systems.
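As a rough illustration, defect density is just a ratio of defect count to size. The sketch below assumes size is given in KLOC (thousands of lines of code); the function name and the numbers are hypothetical.

```python
def defect_density(defects: int, size: float) -> float:
    """Return defects per unit of size (e.g. per KLOC or per function point)."""
    if size <= 0:
        raise ValueError("size must be positive")
    return defects / size


# Hypothetical numbers: 45 defects found in a 30 KLOC component.
print(defect_density(45, 30))  # 1.5 defects per KLOC
```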

Customer Problems

It measures the problems that customers encounter when using the product. It reflects the customer’s perspective on the problem space of the software, which includes non-defect-oriented problems as well as defects.

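The problems metric is usually expressed in terms of Problems per User-Month (PUM).

$$PUM = \frac{\text{Total problems that customers reported for a time period}}{\text{Total number of license-months of the software during the period}}$$

Where,

$$\text{Number of license-months} = \text{Number of installed licenses of the software} \times \text{Number of months in the calculation period}$$

PUM is usually calculated for each month after the software is released to the market, and also for monthly averages by year.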
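A minimal sketch of the PUM calculation, assuming the usual definition: reported problems divided by license-months, where license-months are installed licenses multiplied by the number of months in the period. The function name and sample figures are hypothetical.

```python
def problems_per_user_month(problems: int, installed_licenses: int, months: int) -> float:
    """Problems reported per license-month over the calculation period."""
    license_months = installed_licenses * months
    if license_months <= 0:
        raise ValueError("license-months must be positive")
    return problems / license_months


# Hypothetical: 120 problems reported over 3 months with 2,000 installed licenses.
pum = problems_per_user_month(120, 2000, 3)
print(f"{pum:.3f}")  # 0.020
```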

Customer Satisfaction

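Customer satisfaction is often measured through customer survey data on a five-point scale: very satisfied, satisfied, neutral, dissatisfied, and very dissatisfied.

Satisfaction with the overall quality of the product and its specific dimensions is usually obtained through various methods of customer surveys. Based on the five-point-scale data, several metrics with slight variations can be constructed and used, depending on the purpose of analysis. For example: percent of very satisfied customers, percent of satisfied customers, percent of dissatisfied customers, and percent of non-satisfied customers.

Usually, the second of these, percent satisfaction, is used.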

In-process Quality Metrics

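For some organizations, in-process quality metrics simply means tracking defect arrivals during formal machine testing. These metrics include defect density during machine testing, the defect arrival pattern during machine testing, the phase-based defect removal pattern, and defect removal effectiveness.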


Defect density during machine testing

The defect rate during formal machine testing (testing after code is integrated into the system library) is correlated with the defect rate in the field. A higher defect rate found during testing indicates that the software experienced higher error injection during its development process, unless the higher testing defect rate is due to an extraordinary testing effort.

This simple metric of defects per KLOC or function point is a good indicator of quality, while the software is still being tested. It is especially useful to monitor subsequent releases of a product in the same development organization.

Defect arrival pattern during machine testing

The overall defect density during testing provides only a summary of the defects. The pattern of defect arrivals over time gives more information about different quality levels in the field.

Phase-based defect removal pattern

This is an extension of the defect density metric during testing. In addition to testing, it tracks the defects at all phases of the development cycle, including the design reviews, code inspections, and formal verifications before testing.

Because a large percentage of programming defects is related to design problems, conducting formal reviews or functional verifications at the front end enhances the defect removal capability of the process and reduces errors in the software. The pattern of phase-based defect removal reflects the overall defect removal ability of the development process.

With regard to the metrics for the design and coding phases, in addition to defect rates, many development organizations use metrics such as inspection coverage and inspection effort for in-process quality management.

Defect removal effectiveness

It can be defined as follows −

$$DRE = \frac{\text{Defects removed during a development phase}}{\text{Defects latent in the product}} \times 100\%$$

This metric can be calculated for the entire development process, for the front-end before code integration and for each phase. It is called early defect removal when used for the front-end and phase effectiveness for specific phases. The higher the value of the metric, the more effective the development process and the fewer the defects passed to the next phase or to the field. This metric is a key concept of the defect removal model for software development.
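The phase-level calculation can be sketched as follows. The number of latent defects is never known exactly; this example assumes it is approximated as the defects removed in the phase plus the defects found later. That approximation, the function name, and the numbers are illustrative assumptions, not part of the definition above.

```python
def defect_removal_effectiveness(removed_in_phase: int, found_later: int) -> float:
    """DRE in percent, approximating latent defects as removed + found later."""
    latent = removed_in_phase + found_later
    if latent <= 0:
        raise ValueError("no defects recorded for this phase")
    return 100.0 * removed_in_phase / latent


# Hypothetical: design review removed 40 defects; 10 design defects surfaced later.
print(defect_removal_effectiveness(40, 10))  # 80.0
```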

Maintenance Quality Metrics

Although not much can be done to alter the quality of the product during the maintenance phase, defects can still be eliminated as soon as possible and with excellent fix quality. The following metrics track how well this is done.

Fix backlog and backlog management index

Fix backlog is related to the rate of defect arrivals and the rate at which fixes for reported problems become available. It is a simple count of reported problems that remain at the end of each month or each week. Using it in the format of a trend chart, this metric can provide meaningful information for managing the maintenance process.

Backlog Management Index (BMI) is used to manage the backlog of open and unresolved problems.

$$BMI = \frac{\text{Number of problems closed during the month}}{\text{Number of problems arrived during the month}} \times 100\%$$

If BMI is larger than 100, the backlog has been reduced. If BMI is less than 100, the backlog has increased.
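A small sketch of the BMI calculation and its interpretation. The function name and the monthly counts are hypothetical.

```python
def backlog_management_index(closed: int, arrived: int) -> float:
    """BMI in percent: problems closed relative to problems arrived in the month."""
    if arrived <= 0:
        raise ValueError("no problems arrived during the month")
    return 100.0 * closed / arrived


# Hypothetical month: 50 problems arrived, 45 were closed.
bmi = backlog_management_index(closed=45, arrived=50)
trend = "reduced" if bmi > 100 else "increased" if bmi < 100 else "unchanged"
print(bmi, trend)  # 90.0 increased
```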

Fix response time and fix responsiveness

The fix response time metric is usually calculated as the mean time of all problems from open to close. Short fix response time leads to customer satisfaction.

The important elements of fix responsiveness are customer expectations, the agreed-to fix time, and the ability to meet one's commitment to the customer.
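As an illustration of the mean open-to-close time, the sketch below assumes each problem is recorded as a pair of open and close dates and that response time is measured in whole days. The record layout and the dates are hypothetical.

```python
from datetime import date


def mean_fix_response_days(problems: list[tuple[date, date]]) -> float:
    """Mean number of days from open to close over all closed problems."""
    if not problems:
        raise ValueError("no closed problems to average")
    total_days = sum((closed - opened).days for opened, closed in problems)
    return total_days / len(problems)


# Hypothetical (opened, closed) pairs.
problems = [
    (date(2020, 12, 1), date(2020, 12, 4)),  # 3 days
    (date(2020, 12, 2), date(2020, 12, 9)),  # 7 days
    (date(2020, 12, 5), date(2020, 12, 7)),  # 2 days
]
print(mean_fix_response_days(problems))  # 4.0
```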

Percent delinquent fixes

It is calculated as follows −

$$\text{Percent delinquent fixes} = \frac{\text{Number of fixes that exceeded the response time criteria by severity level}}{\text{Number of fixes delivered in a specified time}} \times 100\%$$
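The percent delinquent fixes calculation can be sketched like this. The response-time criteria per severity level and the sample fixes are made-up values for illustration; real criteria come from the agreement with the customer.

```python
# Hypothetical response-time criteria in days, by severity level.
CRITERIA_DAYS = {"critical": 2, "high": 5, "medium": 10, "low": 20}


def percent_delinquent_fixes(fixes, criteria=CRITERIA_DAYS) -> float:
    """fixes: (severity, days_to_fix) pairs for fixes delivered in the period."""
    if not fixes:
        raise ValueError("no fixes delivered in the period")
    late = sum(1 for severity, days in fixes if days > criteria[severity])
    return 100.0 * late / len(fixes)


fixes = [("critical", 1), ("critical", 3), ("high", 5), ("medium", 12), ("low", 8)]
print(percent_delinquent_fixes(fixes))  # 40.0 (2 of 5 fixes were late)
```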

Fix Quality

Fix quality, or the number of defective fixes, is another important quality metric for the maintenance phase. A fix is defective if it did not fix the reported problem, or if it fixed the original problem but injected a new defect. For mission-critical software, defective fixes are detrimental to customer satisfaction. The metric of percent defective fixes is the percentage of all fixes in a time interval that are defective.

A defective fix can be recorded in two ways: Record it in the month it was discovered or record it in the month the fix was delivered. The first is a customer measure; the second is a process measure. The difference between the two dates is the latent period of the defective fix.

Usually, the longer the latency, the more customers are affected. If the number of fixes is large, even a small percentage of defective fixes paints an overly optimistic picture, because the absolute number of defective fixes can still be high. The quality goal for the maintenance process, of course, is zero defective fixes without delinquency.

In software projects, it is most important to measure the quality, cost and effectiveness of the project and the processes. Without measuring these, a project can’t be completed successfully.

In today’s article, we will learn, with examples, about software test metrics and measurements and how to use them in the software testing process.

There is a famous statement: “We can’t control things which we can’t measure”.

Here, controlling the project means that a project manager or lead can identify deviations from the test plan as early as possible and react in time. Generating test metrics based on the project’s needs is essential to achieving the quality of the software being tested.


What is Software Testing Metrics?

A Metric is a quantitative measure of the degree to which a system, system component, or process possesses a given attribute.

Metrics can be defined as “STANDARDS OF MEASUREMENT”.

Software Metrics are used to measure the quality of the project. Simply put, a metric is a unit used for describing an attribute; it is a scale for measurement.

For example, “kilogram” is a metric for measuring the attribute “weight”. Similarly, in software, consider the question “How many issues are found in a thousand lines of code?” Here the number of issues is one measurement and the number of lines of code is another; the metric is defined from these two measurements.

Test metrics example:

What is Software Test Measurement?

Measurement is the quantitative indication of extent, amount, dimension, capacity, or size of some attribute of a product or process.

Test measurement example: Total number of defects.

Please refer to the diagram below for a clear understanding of the difference between Measurement & Metrics.

Why Test Metrics?

Generation of Software Test Metrics is the most important responsibility of the Software Test Lead/Manager.

Test Metrics are used to,

Importance of Software Testing Metrics:

As explained above, Test Metrics are essential for measuring the quality of the software.

Now, how can we measure the quality of the software by using Metrics?

Suppose a project does not have any metrics. How will the quality of the work done by a Test Analyst be measured?

For Example A Test Analyst has to,

In the above scenario, if metrics are not followed, then the work completed by the test analyst will be subjective, i.e. the test report will not have the information needed to know the status of their work or of the project.

If Metrics are involved in the project, then the exact status of the work can be published with proper numbers and data.

For instance, in the Test report, we can publish:

1. How many test cases have been designed per requirement?
2. How many test cases are yet to be designed?
3. How many test cases have been executed?
4. How many test cases have passed, failed, or been blocked?
5. How many test cases are not yet executed?
6. How many defects have been identified, and what is the severity of those defects?
7. How many test cases have failed due to one particular defect? etc.

Based on the project’s needs, we can have more metrics than those listed above in order to know the status of the project in detail.

Based on the above metrics, the test lead/manager will get an understanding of the key points mentioned below.

a) %ge of work completed
b) %ge of work yet to be completed
c) Time to complete the remaining work
d) Whether the project is going as per the schedule or lagging, etc.

Based on the metrics, if the project is not going to be completed as per the schedule, then the manager can raise the alarm with the client and other stakeholders, explaining the reasons for the delay, to avoid last-minute surprises.

Metrics Life Cycle:

Types of Manual Test Metrics:

Testing Metrics are mainly divided into 2 categories.

Base Metrics:

Base Metrics are the Metrics which are derived from the data gathered by the Test Analyst during the test case development and execution.

This data is tracked throughout the Test Lifecycle. Examples include the total no. of test cases developed for a project, the no. of test cases that need to be executed, and the no. of test cases passed/failed/blocked.

Calculated Metrics:

Calculated Metrics are derived from the data gathered in Base Metrics. These Metrics are generally tracked by the test lead/manager for Test Reporting purposes.

Examples of Software Testing Metrics:

Let’s take an example to calculate various test metrics used in software test reports:

Below is the table format for the data retrieved from the test analyst who is actually involved in testing:

Definitions and Formulas for Calculating Metrics:

#1) %ge Test cases Executed: This metric is used to obtain the execution status of the test cases in terms of %ge.

%ge Test cases Executed = (No. of Test cases executed / Total no. of Test cases written) * 100.

So, from the above data,

%ge Test cases Executed = (65 / 100) * 100 = 65%

#2) %ge Test cases not executed: This metric is used to obtain the pending execution status of the test cases in terms of %ge.

%ge Test cases not executed = (No. of Test cases not executed / Total no. of Test cases written) * 100.

So, from the above data,

%ge Test cases not executed = (35 / 100) * 100 = 35%

#3) %ge Test cases Passed: This metric is used to obtain the Pass %ge of the executed test cases.

%ge Test cases Passed = (No. of Test cases Passed / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Passed = (30 / 65) * 100 = 46%

#4) %ge Test cases Failed: This metric is used to obtain the Fail %ge of the executed test cases.

%ge Test cases Failed = (No. of Test cases Failed / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Failed = (26 / 65) * 100 = 40%

#5) %ge Test cases Blocked: This metric is used to obtain the blocked %ge of the executed test cases. A detailed report can be submitted by specifying the actual reason of blocking the test cases.

%ge Test cases Blocked = (No. of Test cases Blocked / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Blocked = (9 / 65) * 100 = 14%
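The five execution percentages above can be reproduced with a short script. This is only a sketch: the counts are the ones used in the worked calculations (100 written, 65 executed, 30 passed, 26 failed, 9 blocked), and the helper name `pct` is hypothetical.

```python
def pct(part: int, whole: int) -> float:
    """Return part as a percentage of whole."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100.0 * part / whole


# Base metrics taken from the worked calculations in this article.
written, executed = 100, 65
passed, failed, blocked = 30, 26, 9
not_executed = written - executed

report = {
    "executed": pct(executed, written),
    "not executed": pct(not_executed, written),
    "passed": pct(passed, executed),
    "failed": pct(failed, executed),
    "blocked": pct(blocked, executed),
}
for name, value in report.items():
    print(f"%ge Test cases {name}: {value:.0f}%")
```

Note that executed and not executed are taken relative to the test cases written, while passed, failed, and blocked are taken relative to the test cases executed, so the last three add up to 100%.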

#6) Defect Density = No. of Defects identified / size

(Here “Size” is taken to be the number of requirements, so the Defect Density is calculated as the number of defects identified per requirement. Similarly, Defect Density can be calculated as the number of defects identified per 100 lines of code, per module, etc.)

So, from the above data,

Defect Density = (30 / 5) = 6

#7) Defect Removal Efficiency (DRE) = (No. of Defects found during QA testing / (No. of Defects found during QA testing +No. of Defects found by End-user)) * 100

DRE is used to identify the test effectiveness of the system.

Suppose, During Development & QA testing, we have identified 100 defects. After the QA testing, during Alpha & Beta testing, end-user / client identified 40 defects, which could have been identified during QA testing phase.

Now, The DRE will be calculated as,

DRE = [100 / (100 + 40)] * 100 = [100 / 140] * 100 = 71%

#8) Defect Leakage: Defect Leakage is the Metric used to identify the efficiency of the QA testing, i.e., how many defects were missed or slipped through during QA testing.

Defect Leakage = (No. of Defects found in UAT / No. of Defects found in QA testing) * 100

Suppose, During Development & QA testing, we have identified 100 defects.

After the QA testing, during Alpha & Beta testing, end-user / client identified 40 defects, which could have been identified during QA testing phase.

Defect Leakage = (40 /100) * 100 = 40%
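Both DRE and Defect Leakage can be checked with a few lines of code. The sketch below uses the numbers from the example (100 defects found in QA, 40 found afterwards by the end-user); the function names are hypothetical.

```python
def defect_removal_efficiency(found_in_qa: int, found_by_users: int) -> float:
    """DRE in percent: share of all known defects that QA testing caught."""
    total = found_in_qa + found_by_users
    if total <= 0:
        raise ValueError("no defects recorded")
    return 100.0 * found_in_qa / total


def defect_leakage(found_in_uat: int, found_in_qa: int) -> float:
    """Defect leakage in percent: defects found after QA relative to QA finds."""
    if found_in_qa <= 0:
        raise ValueError("no defects found in QA testing")
    return 100.0 * found_in_uat / found_in_qa


print(f"DRE = {defect_removal_efficiency(100, 40):.1f}%")  # DRE = 71.4%
print(f"Defect Leakage = {defect_leakage(40, 100):.1f}%")  # Defect Leakage = 40.0%
```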

#9) Defects by Priority: This metric is used to identify the no. of defects at each Severity / Priority level, which helps in judging the quality of the software.

%ge Critical Defects = No. of Critical Defects identified / Total no. of Defects identified * 100

From the data available in the above table, %ge Critical Defects = 6/ 30 * 100 = 20%

%ge High Defects = No. of High Defects identified / Total no. of Defects identified * 100

From the data available in the above table, %ge High Defects = 10/ 30 * 100 = 33.33%

%ge Medium Defects = No. of Medium Defects identified / Total no. of Defects identified * 100

From the data available in the above table, %ge Medium Defects = 6/ 30 * 100 = 20%

%ge Low Defects = No. of Low Defects identified / Total no. of Defects identified * 100

From the data available in the above table, %ge Low Defects = 8/ 30 * 100 = 27%
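The severity breakdown can be computed in one pass over the counts. This sketch uses the defect counts from the calculations above (6 critical, 10 high, 6 medium, 8 low); the function and variable names are hypothetical.

```python
def severity_distribution(counts: dict[str, int]) -> dict[str, float]:
    """Return each severity level's share of all defects, in percent."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no defects identified")
    return {level: 100.0 * n / total for level, n in counts.items()}


# Defect counts used in the calculations above (30 defects in total).
defects = {"Critical": 6, "High": 10, "Medium": 6, "Low": 8}
for level, share in severity_distribution(defects).items():
    print(f"%ge {level} Defects = {share:.2f}%")
```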

Recommended reading => How to Write an Effective Test Summary Report

Conclusion:

The metrics provided in this article are mainly used for generating daily/weekly status reports with accurate data during the test case development/execution phase. They are also useful for tracking the project status and the quality of the software.

About the author: This is a guest post by Anuradha K. She has 7+ years of software testing experience and currently works as a consultant for an MNC. She also has good knowledge of mobile automation testing.

Which other test metrics do you use in your project? As usual, let us know your thoughts/queries in comments below.
